Claude Managed Agents vs. Enterprise AI Platforms

What Enterprise Leaders Need to Know Before Choosing

Enterprise AI has entered a new phase — one that is less visible but far more consequential than what came before. The first wave was defined by experimentation: building agents, proving out capabilities, and demonstrating value in controlled settings.

That phase is largely over. What comes next is harder.

The question facing enterprise leaders today is no longer whether AI agents can be deployed. It is whether they can be governed, secured, and scaled without introducing risk that the business cannot absorb. That distinction — between deploying AI and operating it as enterprise infrastructure — is where most organizations are discovering the gap between what they expected and what they actually need.

Claude Managed Agents, released by Anthropic in public beta in April 2026, have accelerated adoption by making agent-based systems more accessible. Sandboxed execution, scoped permissions, and session tracing lower the barrier to entry meaningfully for development teams. That value is real.But as organizations move from pilot to production, a more nuanced picture is emerging. Running agents is not the same as operating an enterprise AI system. And the architectural decisions made at this stage carry consequences that will surface for years.

This post examines both approaches — managed agents and enterprise AI platforms — with the goal of helping enterprise leaders make the right call for their context.

The Simplicity Trap

Managed agents are designed to abstract away infrastructure complexity. Teams focus on building functionality, not managing systems. In early-stage deployments, this feels like a genuine advantage — and often is.

The problem emerges when organizations try to scale that simplicity into production. Managed agents are optimized for task execution, not for the orchestration of complex, interdependent systems. They lack the deeper capabilities that enterprise AI maturity demands: coordinated multi-agent workflows, self-evaluation loops, stateful processes that evolve across extended timeframes, and domain-specific knowledge that must remain within the enterprise boundary.

“What appears to be a platform is often infrastructure streamlined for convenience — but not designed for control.

This is not a criticism of managed agents as a concept. It is a recognition of what they are built for. The gap between ease of entry and enterprise readiness is structural, and it becomes visible precisely when the stakes are highest.

Four Constraints That Define Enterprise Reality

The transition from experimentation to enterprise deployment introduces a set of constraints that are not optional. They are structural requirements — non-negotiable features of how enterprise systems must be built and operated.

1. Data Residency

In any managed cloud environment, data — every tool call, every document processed, every decision logged — flows through vendor-controlled infrastructure. For many use cases, this is an acceptable trade-off. For enterprises operating in regulated industries, it is not.

Organizations handling healthcare records, financial data, proprietary source code, or export-controlled content face strict requirements around where data resides, how long it is retained, and who can access it. The absence of air-gapped deployment options, zero data retention guarantees, or in-country hosting effectively places managed runtimes outside the bounds of compliance for these organizations.

“Data residency is not a configuration option. It is a boundary that either the architecture respects or it doesn’t.

2. Cost at Scale

Consumption-based pricing is intuitive at low volumes. It removes upfront commitment and aligns cost with usage. The problem is that it also scales linearly — and often without the optimization mechanisms enterprises expect from mature infrastructure.

A deployment of twenty agents running eight active hours per day costs in the range of $5,300 per month under current pricing. That figure scales directly with usage, without batch discounts or predictable tiers. For enterprise environments where budget forecasting and cost attribution are operational requirements, this model introduces a level of financial unpredictability that is difficult to justify — particularly as deployments grow.

“At enterprise scale, cost is not just an outcome. It is a design constraint.

3. Vendor Lock-In

AI capabilities, pricing, and model quality are evolving faster than any other technology category. In this environment, architectural flexibility is not a preference — it is a hedge against known uncertainty.

Managed agents, by design, bind workloads to a single model ecosystem. The integration is clean, but the dependency is real. When vendor pricing shifts, when a competing model outperforms on a critical task, or when contractual terms change at renewal, the cost of that lock-in becomes visible. By that point, re-platforming is expensive and disruptive.

“Vendor lock-in rarely hurts at deployment. It surfaces at scale, at renewal, and when the market moves faster than the contract allows.

4. Governance vs. Observability

Session tracing and scoped permissions are a foundation, not a governance framework. As AI systems take on more operational responsibility — influencing decisions with financial, regulatory, or reputational consequences — the gap between visibility and control becomes critical.

Enterprise governance requires role-based access control, multi-tenant isolation, detailed audit trails, and the ability to attribute cost and behavior at the level of granularity a CIO can present to a board. Knowing what happened after the fact is not the same as enforcing what is allowed to happen in the first place.

“Observability tells you what occurred. Governance determines what is permissible.

A Direct Comparison

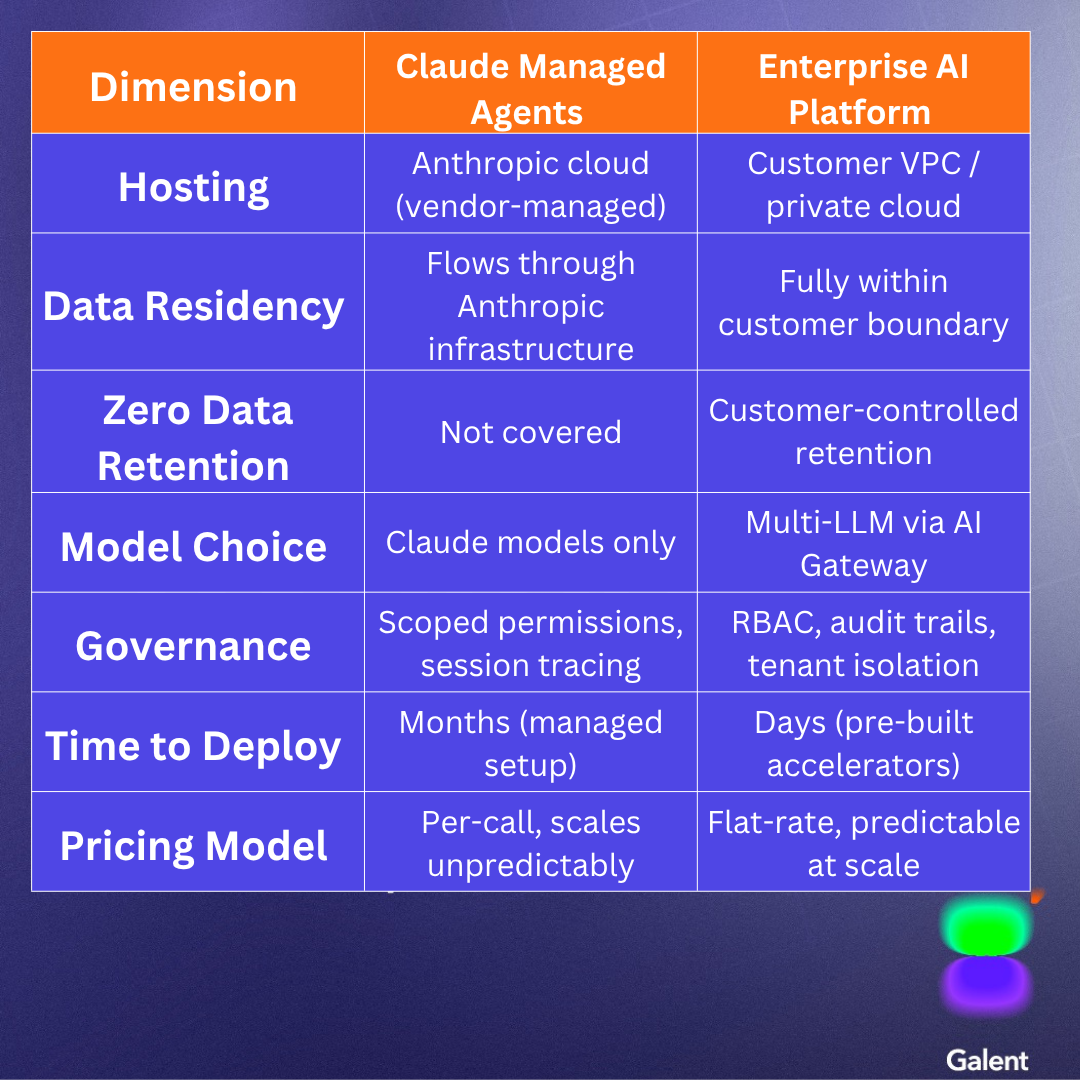

The table below summarizes the key architectural dimensions across both approaches:

The Enterprise Platform Alternative

Enterprise AI platforms are built around a different principle: rather than optimizing for ease of entry, they optimize for control, scalability, and long-term viability. The design philosophy is that enterprises must retain full ownership of their AI systems — where data lives, how it is processed, and how long it is retained

Control as Architecture

Deployment within customer-controlled environments — whether private cloud or VPC — means AI infrastructure aligns with organizational policies and regulatory requirements from day one. Domain customization, including proprietary prompts, fine-tuned retrieval, and client-specific knowledge graphs, stays inside the enterprise boundary rather than flowing through external infrastructure.

Model Independence

An abstraction layer enabling multi-LLM orchestration allows organizations to route tasks across different models based on performance, cost, or compliance requirements. Workloads are not tied to a single vendor’s roadmap. When a better model emerges, or when pricing structures shift, the underlying model can be swapped without re-architecting the orchestration layer.

Governance by Design

Every agent action can be traced, audited, and attributed. Access is controlled at multiple levels. Systems can be segmented for multi-tenant environments. This is not an add-on — it is built into the architecture, which means it is present from the first deployment rather than retrofitted after a compliance review.

Economics That Scale

Predictable, flat-rate pricing structures align with how enterprise procurement actually works. Knowledge and context are reusable. Efficiency improves as systems scale, rather than eroding with it. For organizations that need to forecast AI spend across a fiscal year, this is not a minor detail.

Speed Without Sacrifice

There is a persistent assumption that enterprise-grade control comes at the cost of speed. Pre-built accelerators for application modernization, agent development, and system integration challenge this assumption directly. Time-to-value measured in days rather than months is achievable without compromising governance or scalability.

Is an Enterprise Platform Right for You?

The following is a quick diagnostic. If your organization is checking multiple boxes, a platform approach is not optional — it is necessary.

- Working with regulated or sensitive data (PHI, PII, financial records, proprietary source code, ITAR-controlled content)

- Require strict data residency, zero data retention, or air-gapped deployment options

- Need multi-LLM flexibility to route across Claude, GPT, Gemini, or open-source models

- Building complex, multi-agent workflows or long-running orchestration beyond simple task execution

- Require enterprise governance: RBAC, audit trails, tenant isolation, per-agent cost attribution

- Need predictable, flat-rate pricing aligned to enterprise procurement and budget cycles

- Want faster time-to-value through pre-built accelerators rather than building from scratch

- Need deep customization — prompts, retrieval, knowledge graphs — staying within your boundary

- Require unified observability: LLM traces, cost attribution, and decision logs without third-party tooling

- Want model independence to upgrade or swap models without re-architecting orchestration

- Expect co-engineering support for rollout, change management, and production hardening

A Pragmatic Path Forward

The right answer is not one approach over the other universally. It is about applying each where it creates genuine value.

For enterprise-scale initiatives — particularly those involving regulated data, multi-team deployment, or long-term production workloads — an enterprise AI platform should serve as the default architectural foundation. It provides the control, governance, flexibility, and cost predictability required to operate AI as serious infrastructure.

Managed runtimes like Claude Managed Agents have a real role to play. They are well-suited as targeted accelerators for product-embedded use cases, rapid prototyping, and scenarios where speed of experimentation outweighs the need for deep control. Used this way, they complement rather than compete with a platform-first strategy.

“Build your core on enterprise AI platforms. Use managed agents to accelerate at the edges.

Organizations that treat these as mutually exclusive are limiting themselves unnecessarily. Those that deploy each where it fits — platform as the operating foundation, managed agents as a speed layer at the margins — will move faster, with fewer architectural regrets.

Download Now